Migrate Your Data to S/4 HANA Effortlessly with BODS RDS Jobs

08 October 2020

This blog provides an overview of the data migration process in S/4 HANA. It covers loading data into the S/4 HANA on-premise edition. The data migration tools used should support migrating both master and transactional data from any SAP or non-SAP system to an S/4 HANA system. This solution is designed and developed for S/4 HANA implementation projects only. This blog covers the options available in SAP BODS.

- Data is an individual unit that contains a raw value which does not carry any specific meaning by itself.

- Information is processed, organized data presented in a given context, and it is useful for decision making.

- Information is a group of data that collectively carries a logical meaning.

Data migration – what is it?

Data migration is the process of selecting, preparing, extracting, and transforming data and permanently transferring it from one computer storage system to another. Additionally, validating the migrated data for completeness and decommissioning the legacy data storage are considered part of the overall data migration process.

Data migration is the process of moving data from one location to another, one format to another, or one application to another. Generally, this is the result of introducing a new system or location for the data.

Data Migration – Objective

The objective of data migration is to ensure that legacy data is extracted, cleansed, and transformed, and that high-quality data suitable for all business uses is moved to the new system.

- Standards should be defined for Data cleansing, Data mapping, Data Transformation and Data Enrichment.

- Identify the Data Owners / stakeholders and ensure they are actively engaged in the course of the data migration project.

- Use a tool to automate data mapping, data transformation, and data quality tasks in an organized, sequential manner.

- Ensure that data suitable for the business requirements is delivered at the end of the data migration.

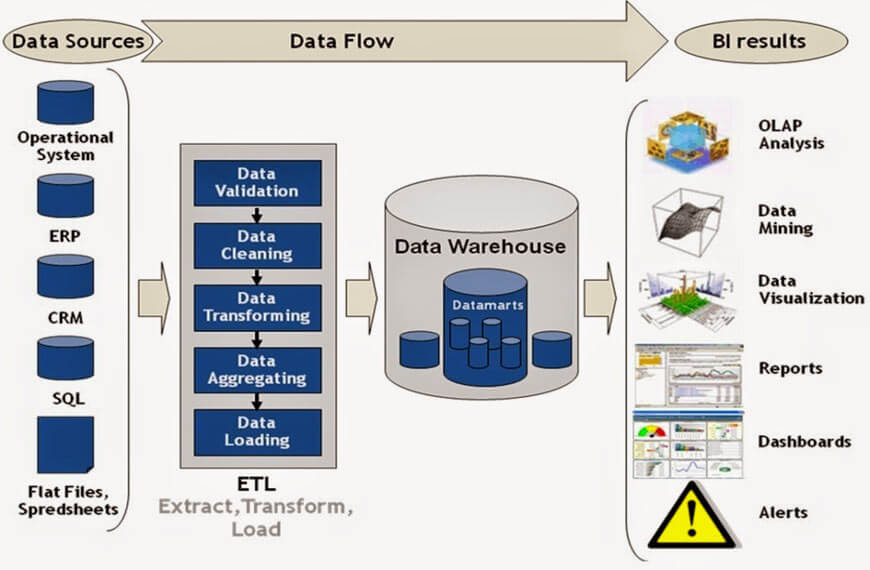

ETL – Extract, Transform and Load

- ETL stands for Extract-Transform-Load, and it describes how data is loaded from a source system into a target system. Data is extracted from a database and transformed to match the target schema. After the transformation, the data is loaded into the target system.
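To make the three ETL steps concrete, here is a minimal sketch in Python. It is not how BODS works internally; the in-memory legacy records, the `customer` table, and the transformation rules are all hypothetical, and SQLite stands in for the target system.

```python
import sqlite3

def extract():
    """Extract: read raw rows from the source (hypothetical in-memory legacy data)."""
    return [
        {"id": "1001", "name": "  acme corp ", "country": "de"},
        {"id": "1002", "name": "Globex", "country": "US"},
    ]

def transform(rows):
    """Transform: reshape each row to match the target schema
    (integer key, trimmed title-case name, upper-case country code)."""
    return [(int(r["id"]), r["name"].strip().title(), r["country"].upper()) for r in rows]

def load(rows, conn):
    """Load: write the transformed rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customer (id INTEGER PRIMARY KEY, name TEXT, country TEXT)"
    )
    conn.executemany("INSERT INTO customer VALUES (?, ?, ?)", rows)
    conn.commit()

target = sqlite3.connect(":memory:")  # stand-in for the target system
load(transform(extract()), target)
print(target.execute("SELECT * FROM customer ORDER BY id").fetchall())
# [(1001, 'Acme Corp', 'DE'), (1002, 'Globex', 'US')]
```

Keeping the three steps as separate functions mirrors the way an ETL job separates readers, transforms, and loaders, so each step can be tested on its own.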

SAP Data Services (BODS)

SAP BODS is an ETL tool from SAP that can extract data from disparate systems, transform it into meaningful information, and load it into various kinds of target systems. BODS stands for BusinessObjects Data Services. It is designed to deliver an enterprise-class solution for data integration, data quality, data processing, data profiling, and text data processing. SAP Data Services is one of the main tools recommended and fully supported for data migration to SAP S/4HANA (on-premise). The data migration approach below follows SAP best practices for migration using BODS.

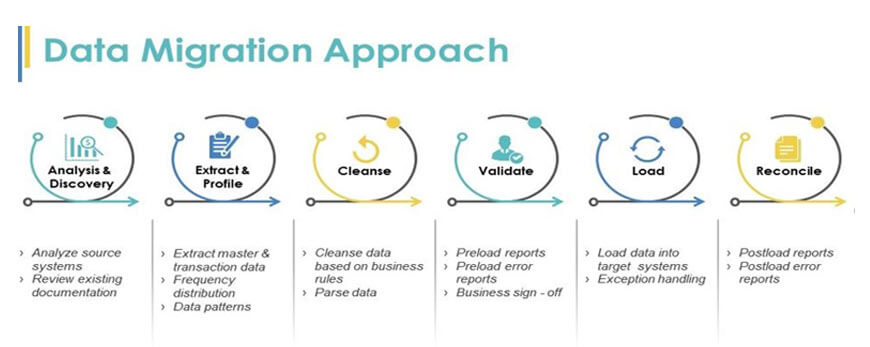

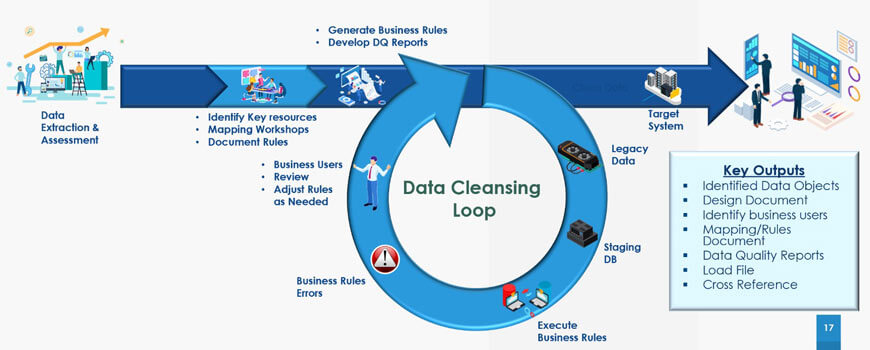

Data Migration Approach

- Analyze: understand the data that is going to be migrated. Check whether the data is required in the target system and whether all required fields have values. Find out which data does not need to be migrated.

- Extract and profile: based on the findings of the Analyze phase, decide which data to pull from the source and extract it. At this point you can begin to define timelines and raise any project concerns; by the end of this step, the whole project should be documented. Data profiling is done to identify data patterns, record counts, value ranges, frequency distributions, etc.

- Cleanse: clean the data based on the mapping rules. Various types of cleansing are applied to make the data robust and suitable for the business requirements.

- Validate: run preload validation and generate quality-check and error reports. The reports are validated and business sign-off is obtained before the load.

- Load: load the data prepared using the mapping rules.

- Reconcile: extract the loaded data and reconcile it with the load file. Generate post-load quality-check and error reports and present them to the stakeholders.

Analyze and Discover

- Analyze the existing source system

- Review the existing documentation

- Gather relevant metadata, i.e. information about the actual data

- Structural metadata – tables, columns, pages, keys, indexes

- Descriptive metadata – author, date, created by, modified by, file size, value
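Structural metadata can usually be read from the source database's own catalog. As a small sketch, assuming the source is reachable as a database (SQLite is used here only as a stand-in, and the `customer` table is hypothetical), the columns, key columns, and indexes of a table can be collected like this:

```python
import sqlite3

# Stand-in source database; a real legacy system would be queried through its own catalog views.
src = sqlite3.connect(":memory:")
src.executescript("""
    CREATE TABLE customer (cust_no TEXT PRIMARY KEY, name TEXT NOT NULL, city TEXT);
    CREATE INDEX idx_customer_city ON customer(city);
""")

def structural_metadata(conn, table):
    """Collect column definitions, primary-key columns and index names for one table."""
    # PRAGMA table_info rows: (cid, name, type, notnull, dflt_value, pk)
    columns = [
        {"name": row[1], "type": row[2], "not_null": bool(row[3]), "pk": bool(row[5])}
        for row in conn.execute(f"PRAGMA table_info({table})")
    ]
    # PRAGMA index_list rows start with (seq, name, unique, ...)
    indexes = [row[1] for row in conn.execute(f"PRAGMA index_list({table})")]
    return {"table": table, "columns": columns, "indexes": indexes}

meta = structural_metadata(src, "customer")
for col in meta["columns"]:
    print(col)
print(meta["indexes"])
```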

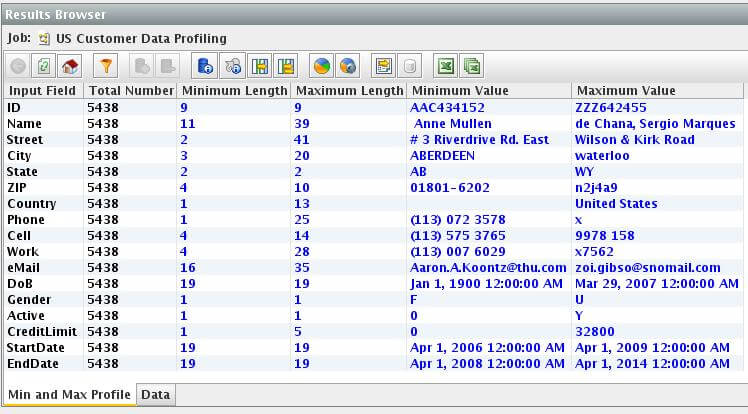

Extract And Profile

- Extract Master and Transaction data

- Column profiling – analyzes the overall distribution of data in a table column, for example by counting the number of values in each column.

Typical measures are range, mean, median, mode, count, presence, absence, dependency, uniqueness, redundancy analysis, and frequency distribution.

- Data Patterns

- Data quality analysis
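The column profiling and data pattern checks above can be sketched in a few lines of Python. This is an illustrative stand-in for a profiling tool, not the BODS profiler; treating empty strings as "absent" and reducing values to a letter/digit shape ("A"/"9") are assumptions chosen for the example.

```python
import re
import statistics
from collections import Counter

def profile_column(values):
    """Column profile: count, presence/absence, uniqueness, frequency distribution,
    and (for purely numeric columns) range, mean, median and mode."""
    present = [v for v in values if v not in (None, "")]
    freq = Counter(present)
    profile = {
        "count": len(values),
        "present": len(present),
        "absent": len(values) - len(present),
        "distinct": len(freq),
        "unique": len(freq) == len(present),  # True if no value repeats
        "top_values": freq.most_common(3),
    }
    numeric = [float(v) for v in present if re.fullmatch(r"-?\d+(\.\d+)?", str(v))]
    if numeric and len(numeric) == len(present):
        profile.update(
            min=min(numeric), max=max(numeric),
            mean=statistics.mean(numeric), median=statistics.median(numeric),
            mode=statistics.mode(numeric),
        )
    return profile

def pattern_of(value):
    """Reduce a value to its shape: letters -> 'A', digits -> '9', other characters kept."""
    return re.sub(r"\d", "9", re.sub(r"[A-Za-z]", "A", str(value)))

def pattern_distribution(values):
    """Frequency of each value shape, e.g. to spot postal codes in an unexpected format."""
    return Counter(pattern_of(v) for v in values if v not in (None, ""))

postal_codes = ["69115", "10115", "69115", "", None, "D-69115"]
print(profile_column(postal_codes))
print(pattern_distribution(postal_codes))
```

A pattern such as `A-99999` appearing next to `99999` is exactly the kind of inconsistency that profiling is meant to surface before cleansing rules are written.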

Transformation

- Data extracted from the sources is compiled, converted, reformatted, and cleansed in the staging area to be fed into the target database in the next step.

- The transformation step involves executing a series of functions and applying sets of rules to the extracted data, to convert it into a standard format to meet the schema requirements of the target database.

- The level of manipulation required in ETL transformation depends on the data extracted and the needs of the business. It includes validating the data as well as rejecting records that are not acceptable.

- High-quality data sources need few transformations, while other datasets may need extensive ones. To meet the technical and business requirements of the target database, the data can be subjected to several transformation techniques.
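As a sketch of "transform, or reject if not acceptable", the function below applies a few rules to each legacy record and routes failures to a reject list with the reason. The rules themselves (mandatory name, a country-name lookup, DD.MM.YYYY to YYYYMMDD date conversion, a 35-character name limit) and the target field names are hypothetical; real rules come from the agreed mapping specification.

```python
from datetime import datetime

# Hypothetical mapping rule: legacy country names -> ISO country codes.
COUNTRY_MAP = {"Germany": "DE", "Deutschland": "DE", "United States": "US", "USA": "US"}

def transform_record(rec):
    """Apply transformation rules to one legacy record; raise ValueError to reject it."""
    name = (rec.get("name") or "").strip()
    if not name:
        raise ValueError("name is mandatory")
    country = COUNTRY_MAP.get((rec.get("country") or "").strip())
    if country is None:
        raise ValueError(f"unmapped country {rec.get('country')!r}")
    # Assumed legacy date format DD.MM.YYYY; the target is assumed to expect YYYYMMDD.
    valid_from = datetime.strptime(rec["created"], "%d.%m.%Y").strftime("%Y%m%d")
    # Assumed target field length of 35 characters for the name.
    return {"name": name[:35], "country": country, "valid_from": valid_from}

def transform_all(records):
    """Split records into transformed rows and rejected rows (with the rejection reason)."""
    accepted, rejected = [], []
    for rec in records:
        try:
            accepted.append(transform_record(rec))
        except (KeyError, ValueError) as exc:
            rejected.append((rec, str(exc)))
    return accepted, rejected

ok, bad = transform_all([
    {"name": " Acme ", "country": "Germany", "created": "01.02.2020"},
    {"name": "Globex", "country": "Mars", "created": "01.01.2020"},
])
print(ok)   # transformed rows
print(bad)  # rejected rows with reasons
```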

Cleanse

- Cleanse the data based on business rules

- Parse data – analyze the syntax of data structures so that the data can be mapped into a table format with a header, rows, and columns, and the specified fields can be extracted

- Match, merge, and deduplicate the data

- Replication – create something from the same blueprint

- Duplication – make an exact copy

- Deduplication – remove duplicates or recurring occurrences. This is also called single-instance storage

- Manual Cleansing

- Enrichment
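Match, merge, and deduplicate can be illustrated with a simple rule-based sketch: build a normalized match key per record, group records that share a key, and merge each group into one surviving record. This uses exact matching on a normalized key rather than the fuzzy matching a data quality tool would offer, and the legal-form suffix list and the "first record wins, gaps filled from duplicates" survivorship rule are assumptions for illustration.

```python
import re
import unicodedata

LEGAL_FORMS = r"\b(gmbh|ag|inc|ltd|corp)\b"  # hypothetical suffix list

def match_key(rec):
    """Normalized match key: accent-free, lower-case name without legal form, plus postal code."""
    name = unicodedata.normalize("NFKD", rec["name"]).encode("ascii", "ignore").decode()
    name = re.sub(r"[^a-z0-9 ]", " ", name.lower())
    name = re.sub(LEGAL_FORMS, " ", name)
    return (" ".join(name.split()), rec["postal_code"].strip())

def deduplicate(records):
    """Group records by match key and merge each group into one survivor.

    Survivorship (assumed): keep the first record, fill its empty fields from later duplicates.
    """
    survivors = {}
    for rec in records:
        key = match_key(rec)
        if key not in survivors:
            survivors[key] = dict(rec)
        else:
            merged = survivors[key]
            for field, value in rec.items():
                if not merged.get(field) and value:
                    merged[field] = value
    return list(survivors.values())

customers = [
    {"name": "Müller GmbH", "postal_code": "69115", "phone": ""},
    {"name": "MULLER  gmbh.", "postal_code": "69115 ", "phone": "+49 6221 0000"},
    {"name": "Schmidt AG", "postal_code": "10115", "phone": "030"},
]
for rec in deduplicate(customers):
    print(rec)
```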

Validate, Load and Reconcile

Validate with

- Preload Reports

- Error Reports

- Get the business sign-off after validating the reports
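A preload validation step can be sketched as a set of checks that split the prepared rows into clean rows and an error report for the business to review before sign-off. The SAP field names KUNNR, NAME1, LAND1, and BUKRS are real customer master fields, but which of them are mandatory, the allowed country list, and the CSV report layout are hypothetical choices for this example.

```python
import csv
import io

MANDATORY = ["KUNNR", "NAME1", "LAND1", "BUKRS"]   # assumed mandatory fields
ALLOWED_COUNTRIES = {"DE", "US", "FR"}             # assumed allowed values

def preload_validation(rows):
    """Return (clean_rows, error_rows); each error row lists every check that failed."""
    clean, errors, seen_keys = [], [], set()
    for line_no, row in enumerate(rows, start=1):
        problems = [f"{f} missing" for f in MANDATORY if not row.get(f)]
        if row.get("LAND1") and row["LAND1"] not in ALLOWED_COUNTRIES:
            problems.append(f"LAND1 {row['LAND1']} not allowed")
        key = row.get("KUNNR")
        if key:
            if key in seen_keys:
                problems.append("duplicate KUNNR")
            seen_keys.add(key)
        if problems:
            errors.append({"line": line_no, "KUNNR": key or "", "errors": "; ".join(problems)})
        else:
            clean.append(row)
    return clean, errors

def error_report_csv(errors):
    """Render the error rows as CSV for business review."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=["line", "KUNNR", "errors"])
    writer.writeheader()
    writer.writerows(errors)
    return buf.getvalue()

clean, errors = preload_validation([
    {"KUNNR": "1001", "NAME1": "Acme", "LAND1": "DE", "BUKRS": "1000"},
    {"KUNNR": "1002", "NAME1": "", "LAND1": "XX", "BUKRS": "1000"},
])
print(error_report_csv(errors))
```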

Load

- Load Data into Target

- Exception Handling
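Exception handling during the load usually means that one bad record must not stop the whole run: failures are collected and reported while the remaining records continue. A minimal sketch of that pattern follows; `post_record` is a hypothetical callable standing in for the real call to the target (for example, sending one IDoc), and it is assumed to raise an exception when the target rejects a record.

```python
from dataclasses import dataclass, field

@dataclass
class LoadResult:
    loaded: list = field(default_factory=list)
    failed: list = field(default_factory=list)  # (record, error message)

def load_with_exception_handling(records, post_record):
    """Post records one by one; collect failures instead of aborting the load."""
    result = LoadResult()
    for rec in records:
        try:
            post_record(rec)
        except Exception as exc:  # record the failure and continue with the next record
            result.failed.append((rec, str(exc)))
        else:
            result.loaded.append(rec)
    return result

def fake_post(rec):
    """Hypothetical target call used only for this example."""
    if not rec.get("NAME1"):
        raise RuntimeError("NAME1 is required by the target")

res = load_with_exception_handling([{"KUNNR": "1", "NAME1": "Acme"}, {"KUNNR": "2"}], fake_post)
print(len(res.loaded), "loaded,", len(res.failed), "failed:", res.failed)
```

The failed list can then feed the post-load error reports used in the reconciliation step.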

Reconcile

- Post load reports

- Post load error reports

- Get the business sign-off after validating the reports
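The reconciliation step compares the load file with the data extracted back from the target. The sketch below reports record counts, keys missing from the target, unexpected keys, and field-level mismatches. It assumes both sides share the same key (KUNNR here); if the target assigns its own numbers or stores keys with leading zeros, a legacy-to-new key mapping or normalization would be needed first. The compared fields are a hypothetical choice.

```python
def reconcile(load_file, extracted, key="KUNNR", fields=("NAME1", "LAND1")):
    """Compare the load file with data extracted from the target after loading."""
    source = {r[key]: r for r in load_file}
    target = {r[key]: r for r in extracted}
    return {
        "source_count": len(source),
        "target_count": len(target),
        "missing": sorted(source.keys() - target.keys()),      # in load file, not in target
        "unexpected": sorted(target.keys() - source.keys()),   # in target, not in load file
        "mismatches": [                                        # (key, field, loaded, found)
            (k, f, source[k].get(f), target[k].get(f))
            for k in sorted(source.keys() & target.keys())
            for f in fields
            if source[k].get(f) != target[k].get(f)
        ],
    }

load_file = [
    {"KUNNR": "1001", "NAME1": "Acme", "LAND1": "DE"},
    {"KUNNR": "1002", "NAME1": "Globex", "LAND1": "US"},
    {"KUNNR": "1003", "NAME1": "Initech", "LAND1": "US"},
]
extracted = [
    {"KUNNR": "1001", "NAME1": "Acme", "LAND1": "DE"},
    {"KUNNR": "1002", "NAME1": "Globex Corp", "LAND1": "US"},
]
print(reconcile(load_file, extracted))
```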

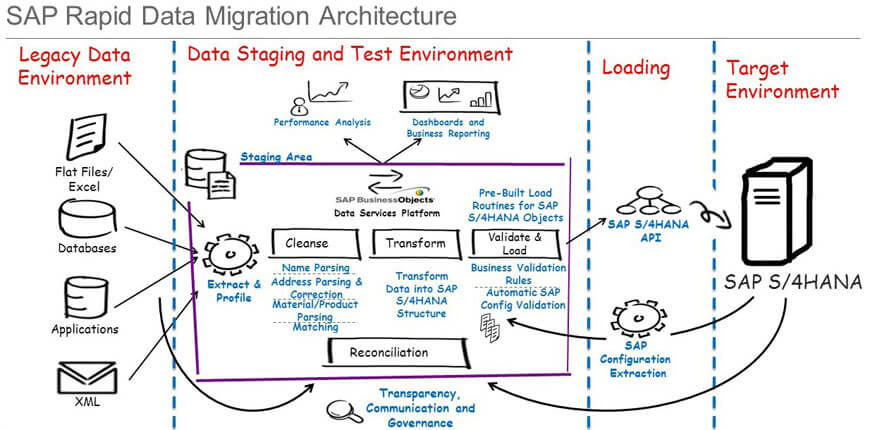

Rapid Data Migration Architecture

The architecture and scope of this solution are visualized in the diagram below:

Rapid Data Migration

SAP provides the best practice content for “rapid data migration to SAP S/4HANA (on premise)”. It is available at https://support.sap.com/en/my-support/software-downloads.html under:

Installation and Upgrades > Software Download > By Category > SAP Rapid Deployment solutions > Rapid Data Migration for SAP S/4HANA, On Premise Edition

The ATL files are downloaded and imported into BODS, which creates the jobs and lookups in BODS.

The content delivered with Best Practice includes:

- Detailed documentation for technical set-up, preparation and execution of migration for each supported object and extensibility guide.

- SAP Data Services (DS) files, including IDoc status check and Reconciliation jobs.

- IDoc mapping templates for SAP S/4HANA (in MS Excel).

- Lookup files for Legacy to SAP S/4HANA value mapping.

- Migration Services Tool for value mapping and lookup files management.
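The lookup files translate legacy values into the values expected by SAP S/4HANA. The sketch below shows the idea with a two-column CSV; this layout and the sample values are assumptions for illustration, not the actual format of the RDS lookup files. Unmapped legacy values are collected so that the lookup can be completed before the load.

```python
import csv
import io

# Hypothetical lookup content: legacy value -> S/4HANA value for one field.
LOOKUP_CSV = """legacy_value,s4_value
DEU,DE
GER,DE
USA,US
"""

def load_lookup(text):
    """Read the lookup file into a dict."""
    return {row["legacy_value"]: row["s4_value"] for row in csv.DictReader(io.StringIO(text))}

def map_values(values, lookup):
    """Translate legacy values; return mapped values (None if unmapped) and the unmapped set."""
    mapped, unmapped = [], set()
    for v in values:
        if v in lookup:
            mapped.append(lookup[v])
        else:
            mapped.append(None)
            unmapped.add(v)
    return mapped, sorted(unmapped)

lookup = load_lookup(LOOKUP_CSV)
print(map_values(["DEU", "USA", "XYZ", "GER"], lookup))
```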

SAP Data Services uses IDocs to post data into SAP S/4HANA. IDoc configuration on the SAP S/4HANA target system is a common prerequisite for all migration objects.

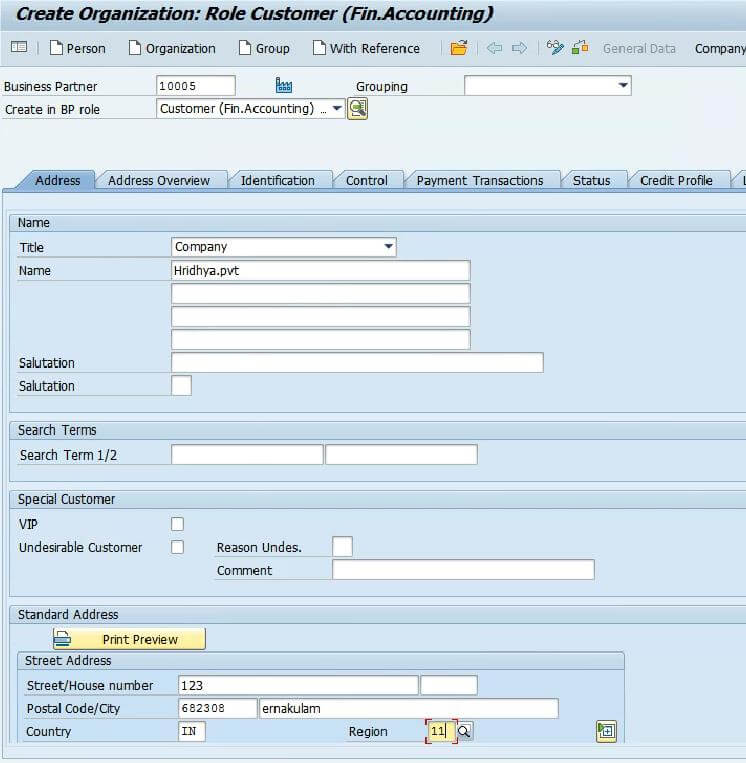

RDS Example – Customer Master

- The customer master contains information about customers, and this information is stored in individual customer master records in SAP. Each customer is identified by a customer number under which the customer's details are maintained.

- The data in a customer master record controls how transaction data is posted and processed for that customer. Customer master records are divided into several areas, including:

- General data

- Company code data

- Sales area data

Tables used in customer master

- Basic Data : KNA1

- Sales Data : KNVV

- Partner Roles : KNVP

- Company Code : KNB1

- Tax Indicators Data : KNVI

- Dunning Data : KNB5

- Bank Data : KNBK

- Contact Data : KNVK

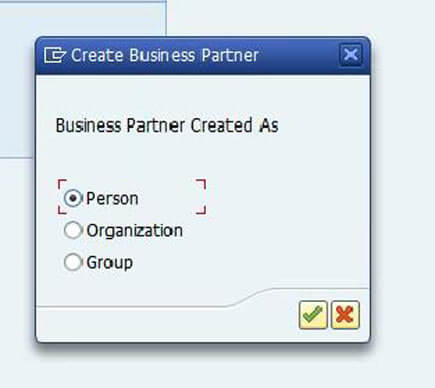

Create Customer Using T-Code

- Step 1: Use T-Code VD01 (in S/4HANA, this redirects to the Business Partner transaction BP)

- Step 2: Select Person, Organization, or Group

- Step 3: Fill in the mandatory fields

- Step 4: Save

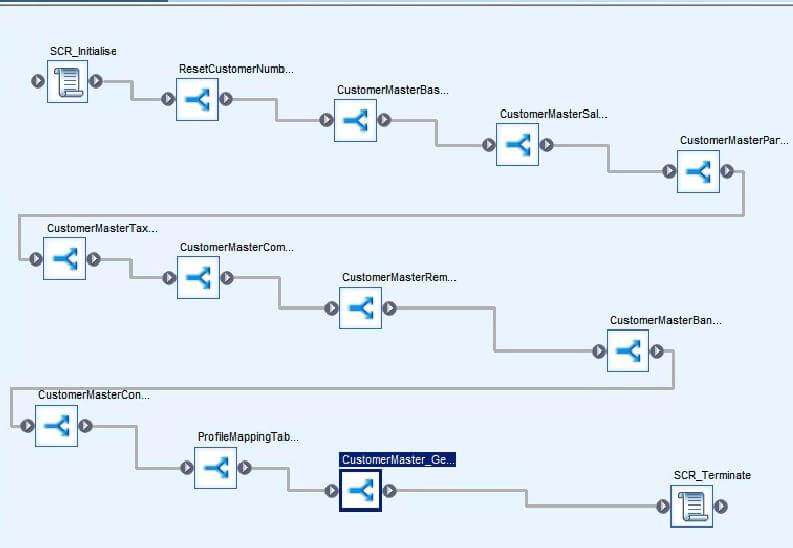

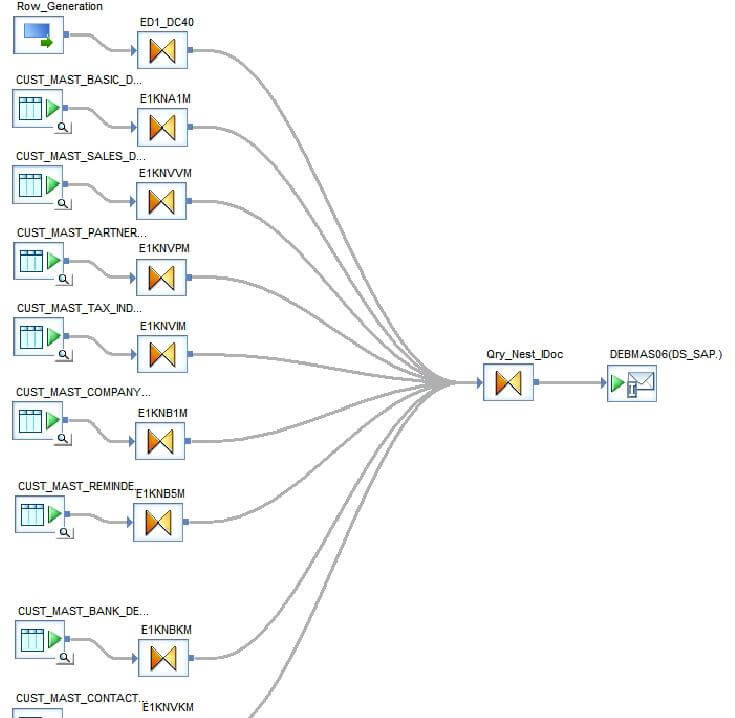

RDS Job in BODS

- Workflow of the customer master job

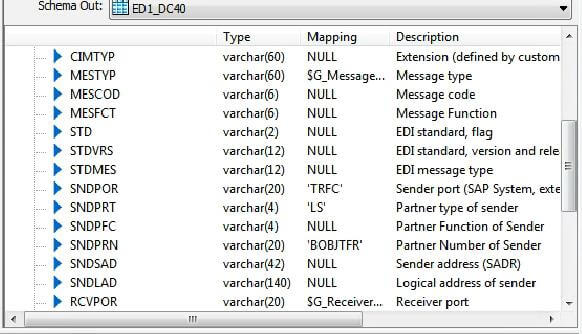

- The IDoc type used for the customer master is DEBMAS06

The source data is split into each of the required tables. The Query generator fills the segments and passes them to the IDoc.

- Before loading, the IDoc sender port, receiver port, and port number must be added.
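The idea of splitting one flat source record across the customer master tables and then nesting the pieces under the general data can be sketched with plain dictionaries. This is only an illustration of the data shape, not the BODS job or the real DEBMAS06 segment structure. The SAP field names (KUNNR, NAME1, LAND1, ORT01, BUKRS, AKONT, VKORG, VTWEG, SPART) are real customer master fields, but the legacy column names and the mapping between them are hypothetical.

```python
# Hypothetical mapping: legacy column -> SAP field, per customer master table.
TABLE_FIELDS = {
    "KNA1": {"cust_no": "KUNNR", "name": "NAME1", "country": "LAND1", "city": "ORT01"},
    "KNB1": {"cust_no": "KUNNR", "company_code": "BUKRS", "recon_account": "AKONT"},
    "KNVV": {"cust_no": "KUNNR", "sales_org": "VKORG", "distr_channel": "VTWEG", "division": "SPART"},
}

def split_record(rec):
    """Split one flat legacy record into per-table rows keyed by SAP table name."""
    return {
        table: {sap: rec[col] for col, sap in fields.items() if col in rec}
        for table, fields in TABLE_FIELDS.items()
    }

def build_nested(rows):
    """Nest company code and sales area data beneath the general data,
    loosely mirroring how a customer IDoc nests its segments (plain dicts only)."""
    doc = dict(rows["KNA1"])
    doc["company_codes"] = [rows["KNB1"]]
    doc["sales_areas"] = [rows["KNVV"]]
    return doc

legacy = {
    "cust_no": "1001", "name": "Acme", "country": "DE", "city": "Heidelberg",
    "company_code": "1000", "recon_account": "140000",
    "sales_org": "1000", "distr_channel": "10", "division": "00",
}
print(build_nested(split_record(legacy)))
```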

IDoc Configuration in SAP

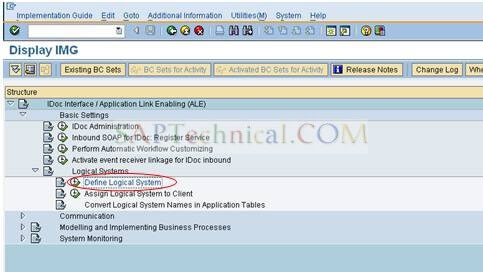

Define Logical System

Log in to the SAP system and go to tcode SALE.

Click on Basic settings > Logical system > Define logical system.

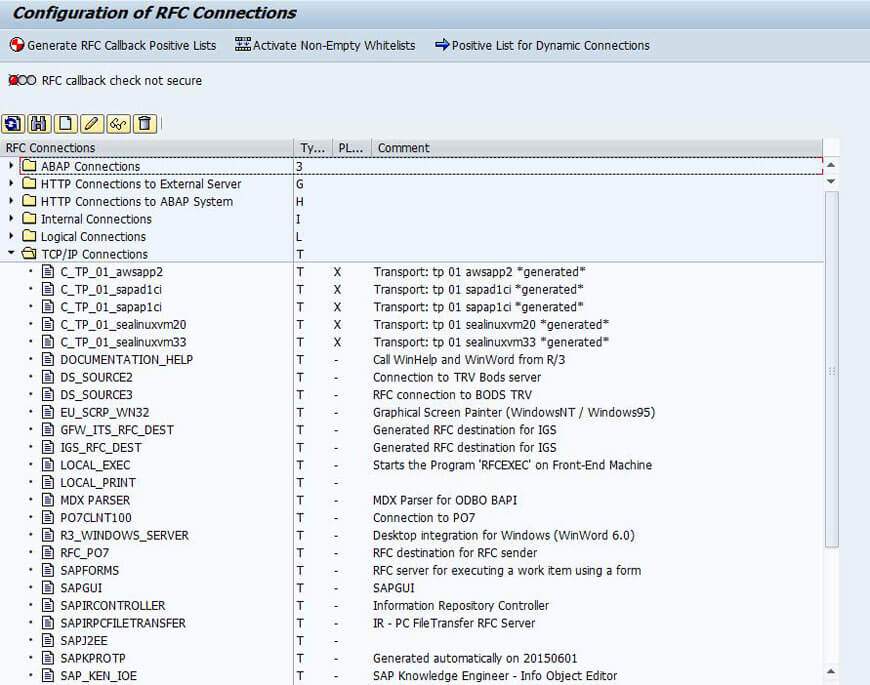

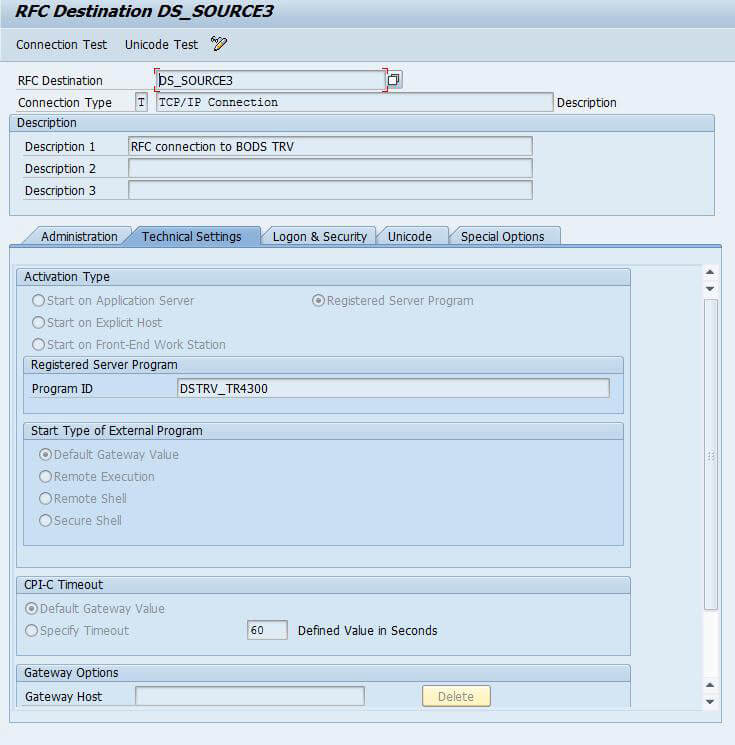

Define RFC Destination

Go to Tcode SM59 and configure the RFC destination in the SAP system.

Then run the connection test to make sure the RFC connection works.

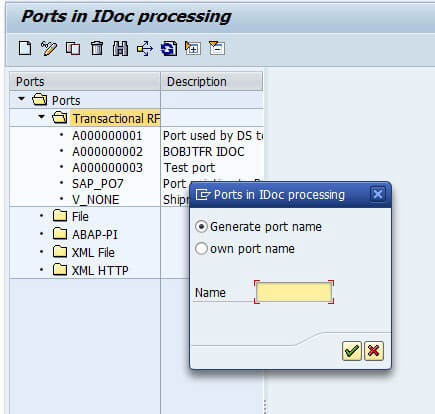

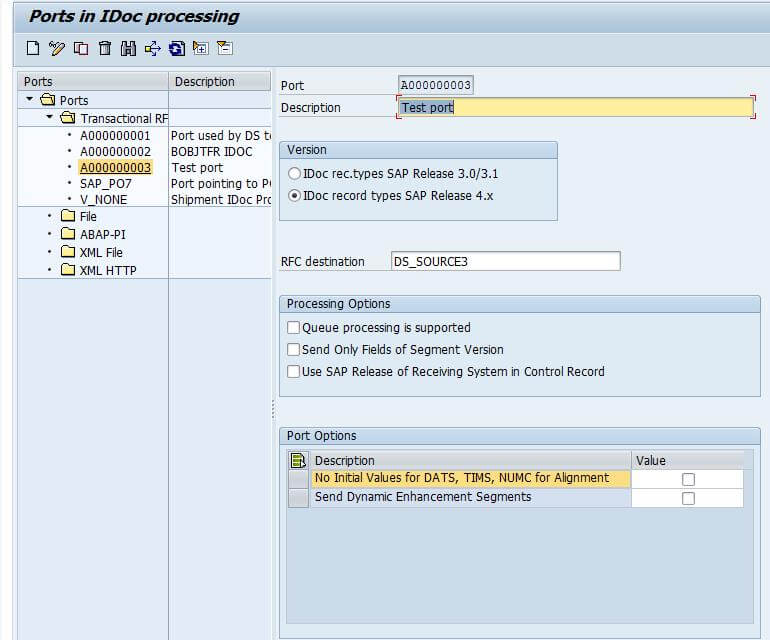

Define RFC port

Go to Tcode WE21 and create a new transactional RFC (tRFC) port that uses the RFC destination. To create the port, first select Transactional RFC and then click the Create button.

We can either use a system-generated name or our own port name.

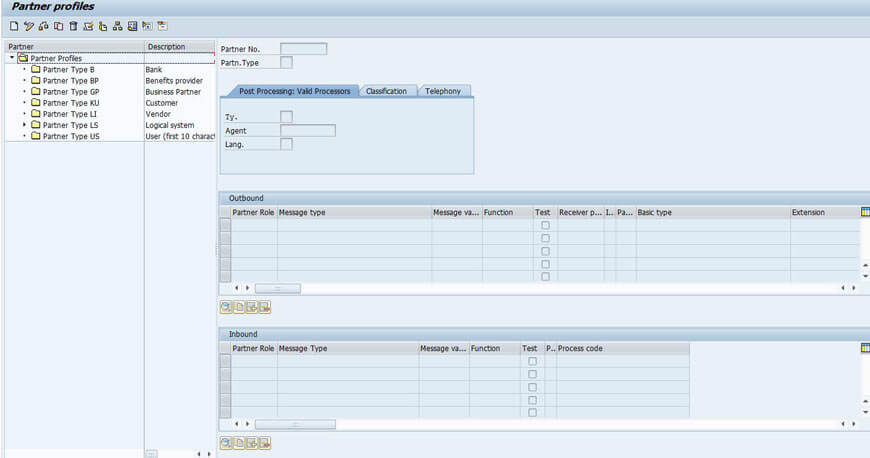

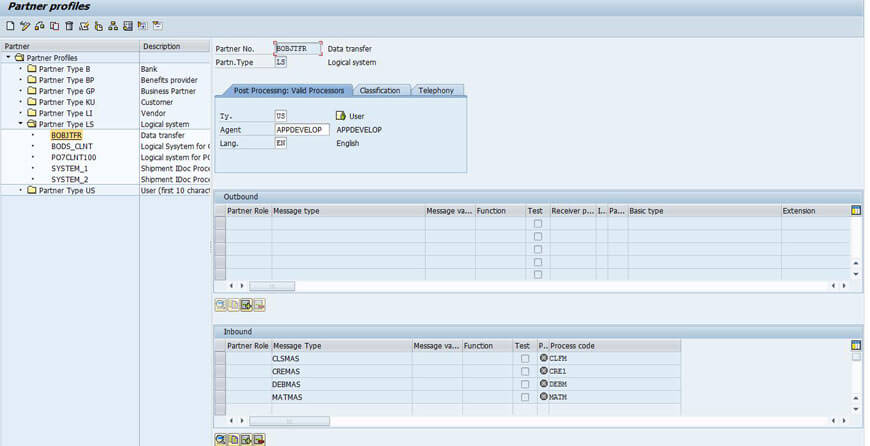

Define Partner Profile

Go to Tcode WE20 and click the Create button (or press F5).

Enter the logical system name that we created as the partner number, and LS (Logical System) as the partner type. In the "Post processing: Valid processors" tab, set the type to "US" and the agent to the user name we are logged on with (it can be taken from the search help), and specify the language. After entering these values, click Save. Inbound and outbound parameters can be added only after saving.

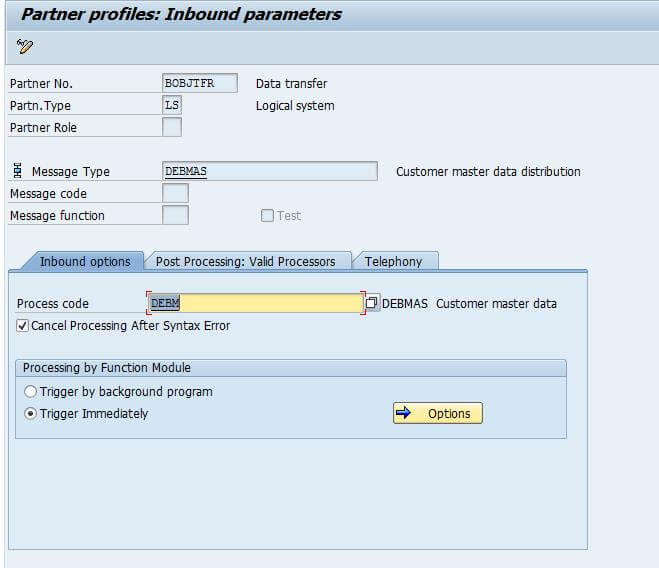

In the inbound parameters, add every message type we need to use. For example, to send customer master data IDocs, the corresponding message type DEBMAS must be added to the inbound parameters.

Once all the required configuration is complete, run the job from BODS to load the data into S/4 HANA.
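The configuration steps depend on each other: the port points to the RFC destination, the partner number is the logical system, and the inbound parameters must contain the message type being loaded. A small pre-flight checklist can capture those dependencies before the BODS job is started. This is a hypothetical sketch: the values would be copied by hand from SALE, SM59, WE21, and WE20, and the class, its field names, and the sample system and port names are invented for illustration; it does not read anything from SAP.

```python
from dataclasses import dataclass, field

@dataclass
class IdocSetup:
    """Hypothetical record of the values maintained in SALE, SM59, WE21 and WE20."""
    logical_systems: set          # SALE: defined logical systems
    rfc_destinations: set         # SM59: destinations whose connection test passed
    ports: dict                   # WE21: tRFC port name -> RFC destination
    partner_number: str           # WE20: partner number
    partner_type: str             # WE20: partner type
    inbound_message_types: set = field(default_factory=set)  # WE20 inbound parameters

def preflight(setup, required_message_types=("DEBMAS",)):
    """Return a list of configuration problems; an empty list means the checks passed."""
    problems = []
    if setup.partner_number not in setup.logical_systems:
        problems.append("partner number is not a defined logical system")
    if setup.partner_type != "LS":
        problems.append("partner type should be LS")
    for port, dest in setup.ports.items():
        if dest not in setup.rfc_destinations:
            problems.append(f"port {port} points to unknown or untested RFC destination {dest}")
    for mt in required_message_types:
        if mt not in setup.inbound_message_types:
            problems.append(f"message type {mt} missing from inbound parameters")
    return problems

setup = IdocSetup({"BODSCLNT"}, {"BODS_RFC"}, {"A000000001": "BODS_RFC"}, "BODSCLNT", "LS", {"DEBMAS"})
print(preflight(setup) or "configuration checks passed")
```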